ReviewMeta Analysis Test: Suspicious Reviewers

At ReviewMeta we have developed a number of tests that look beyond the review itself and examine the reviewers who write the reviews. A normal reader may look at one or two reviewers’ histories if they think their reviews are suspicious, but without hundreds of hours of compiling stats, they would never be able to see the histories of all of the reviewers for a given product. It is just too labor-intensive for the average reader. Luckily, at ReviewMeta we have the ability to discover meaningful statistics about reviewers’ posting histories. We examine four suspicious reviewer traits in our analysis. Each of these traits can tell us something about who is reviewing these products, and taken together they can be very revealing.

One-Hit Wonders: These are reviewers who have written one review, which means these reviewers have only reviewed the product being analyzed. Unbiased reviewers tend to be long term members of a site who post more than a single one-off review. If a given product has a high percentage of One-Hit Wonders, it can indicate that review manipulation is occurring. While a number of causes might result in a One-Hit Wonder, a few common ones include brands creating throwaway accounts to post a single fake review for their product, or brands somehow enticing people who don’t normally write reviews to review this product.

Take-Back Reviewers: Any reviewer who we have discovered to have a deleted review in their history is flagged as a Take-Back Reviewer. These reviewers are suspicious because the review was most likely removed by the review platform due to a violation of the terms of service. This may indicate that the user has been caught for review manipulation before and we don’t know for sure if they have stopped breaking the rules.

Single-Day Reviewers: Reviewers who have posted multiple reviews but have posted all of them in a single day are labeled as Single-Day Reviewers. Like One-Hit Wonders, these reviewers lose trust because they haven’t shown a long term commitment to the reviewing platform. This lack of long term commitment may be indicative that the reviewer is posting biased reviews.

Never-Verified Reviewers: These reviewers have never written a verified purchaser review. This raises a red flag because typical unbiased reviewers will review products after they have purchased them. If a reviewer purchases a product from one retailer, it would be odd to always write the review on a different reviewing platform. This may indicate that the reviewer is not a legitimate account but rather a sockpuppet account set up by brands in order to post favorable reviews.
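The four traits above can be sketched as simple checks on a reviewer's posting history. This is only an illustrative sketch, not ReviewMeta's actual code: the `Review` record, its field names, and the `suspicious_traits` function are hypothetical, and it assumes the history contains every known review by that reviewer, including deleted ones.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Review:
    """Hypothetical record for one review in a reviewer's history."""
    posted: date
    verified: bool = False  # marked as a verified purchase
    deleted: bool = False   # discovered to have been deleted


def suspicious_traits(history):
    """Return the set of trait labels that apply to one reviewer's history."""
    traits = set()
    # One-Hit Wonder: exactly one review in the whole history.
    if len(history) == 1:
        traits.add("one_hit_wonder")
    # Take-Back Reviewer: at least one deleted review.
    if any(r.deleted for r in history):
        traits.add("take_back")
    # Single-Day Reviewer: several reviews, all posted on the same day.
    if len(history) > 1 and len({r.posted for r in history}) == 1:
        traits.add("single_day")
    # Never-Verified Reviewer: no verified purchaser review at all.
    if history and not any(r.verified for r in history):
        traits.add("never_verified")
    return traits


same_day = [Review(date(2020, 1, 1), verified=True), Review(date(2020, 1, 1))]
print(suspicious_traits(same_day))
```

Note that a reviewer can carry more than one label at once; a lone unverified review, for example, would count as both a One-Hit Wonder and a Never-Verified Reviewer under these assumptions.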

Each of the traits above can have a plausible explanation. Maybe that One-Hit Wonder is a legitimate reviewer who just started reviewing products online. Possibly some reviewers prefer to sit down on one day and review all of the products they bought that year. Perhaps that Take-Back Reviewer accidentally posted a review for the wrong product and didn’t want to tarnish an innocent product’s name. And maybe that Never-Verified reviewer prefers shopping in brick and mortar stores but still wants his opinion to be heard.

In order to see whether any group of suspicious reviewers is benign or not, we do the following for each of them (One-Hit Wonders, Take-Back Reviewers, Single-Day Reviewers, Never-Verified Reviewers):

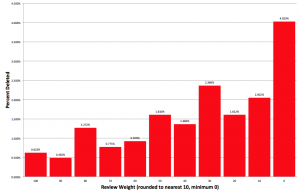

First, we check the overall percentage of the group. While it’s perfectly normal to see a small percentage of each group of Suspicious Reviewers, an excessive amount can trigger a warning or failure. Next we check to see if any group of Suspicious Reviewers has a higher average rating than all other reviews. If they do, we’ll check to see if this discrepancy is statistically significant. This means that we run the data through an equation that takes into account the total number of reviews along with the variance of the individual ratings and tells us if the discrepancy is more than just the result of random chance. (You can read more about our statistical significance tests here.) If a group of Suspicious Reviewers is giving the product a statistically significant higher rating, it strongly suggests that the reviewers in that group are not benign and are unfairly inflating the overall product rating.
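As a rough illustration of the two checks described above, the sketch below computes a group's share of all reviews and then tests whether the group's higher average rating could be due to chance. The article does not state which equation ReviewMeta uses, so this substitutes a Welch-style statistic with a normal approximation, which likewise depends on the number of reviews and the variance of the ratings. The function names and the `max_share` and `alpha` thresholds are assumptions chosen for the example.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def group_share(group_ratings, other_ratings):
    """Fraction of all reviews that come from the suspicious group."""
    total = len(group_ratings) + len(other_ratings)
    return len(group_ratings) / total if total else 0.0


def rating_gap_p_value(group_ratings, other_ratings):
    """One-sided p-value for 'the group rates higher than everyone else'.

    Welch-style statistic with a normal approximation (an assumption, not
    ReviewMeta's equation). Returns None when the test cannot be computed.
    """
    n1, n2 = len(group_ratings), len(other_ratings)
    if n1 < 2 or n2 < 2:
        return None
    gap = mean(group_ratings) - mean(other_ratings)
    std_err = sqrt(variance(group_ratings) / n1 + variance(other_ratings) / n2)
    if std_err == 0:
        return None
    return 1 - NormalDist().cdf(gap / std_err)


def evaluate_group(group_ratings, other_ratings, max_share=0.25, alpha=0.05):
    """Apply both checks; the thresholds are hypothetical placeholders."""
    findings = []
    if group_share(group_ratings, other_ratings) > max_share:
        findings.append("excessive share")
    p = rating_gap_p_value(group_ratings, other_ratings)
    if p is not None and p < alpha:
        findings.append("significantly higher rating")
    return findings


group = [5] * 20 + [4] * 5
others = [3, 4, 2, 5, 3, 4, 1, 3, 4, 2] * 5
print(evaluate_group(group, others))
```

Because the p-value is one-sided, a group that rates the product the same as or lower than everyone else never triggers the second finding, which matches the order of checks in the text.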

I don’t agree with the single day reviewers. I purchase many products on amazon and I like to actually use those products before I write a review. In addition, I am a busy person so I frequently review several items in one sitting.

The Single-Day reviewers test isn’t quite how you described. It’s basically like one-hit-wonders but when every single one of their reviews is just on one day. If you post reviews on two different days, you’re not a Single-Day reviewer.

In addition to this, keep in mind this important part of the article:

First, we check the overall percentage of the group. While it’s perfectly normal to see a small percentage of each group of Suspicious Reviewers, an excessive amount can trigger a warning or failure. Next we check to see if any group of Suspicious Reviewers has a higher average rating than all other reviews. If they do, we’ll check to see if this discrepancy is statistically significant.

Just because someone is a Single-Day reviewer does not mean their reviews are automatically devalued. We have to analyze how reviewers like that are affecting the overall review picture for each product before we make any determinations.

Thanks for clarifying! I appreciate what you have done, and I use it frequently.

“This lack of long term commitment may be indicative that the reviewer is posting biased reviews.”

Do you know why Amazon deleted one of my reviews? I reviewed a very simple item (I no longer remember which one) and was a bit annoyed because I couldn’t come up with 17 words for it, and I said so in the review. Then Amazon wrote to me that they would delete the review because I had broken the rules. That made me angry again, and it wasn’t worth it to me to revise the review. I’m a critical reviewer; I have written 38 reviews and only this one was deleted. But I have just 65% trust. I have to see myself as a suspicious reviewer ;-), which is no problem for me. But for me, it puts this parameter into perspective.

Hi Anna-

No one test is perfect on its own, and we’re not claiming that this algorithm is 100% accurate. With over a billion data points, there are bound to be false positives and false negatives.

Thanks for your quick answer. I understand that… I just wanted you to know, how simple the reason can be, why Amazon deletes a review 😉

Thank you for your great experience.

I have a lot of verified-purchase reviews, but in your analysis my profile is flagged as a “never verified reviewer”.

How is this possible?

With hundreds of millions of reviewers in our system, we sometimes aren’t looking at every single review of every single user. This will be corrected as the system crawls more data.