ReviewMeta Analysis Test: Phrase Repetition

One of the most obvious ways to detect suspicious reviews is analyzing the language used within the body of each review. While it’s difficult for us to draw any conclusions from the language of a single review by itself, looking at the aggregate data can help us to identify which reviews might have been created unnaturally.

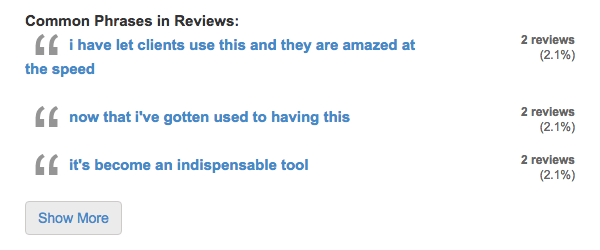

Our process for the Phrase Repetition test is a little more involved than other tests. In a nutshell, we compile a list of phrases that are used across multiple reviews for a given product, then identify which reviews contain these common phrases, and finally compare their average rating to the average rating of reviews which don’t contain these common phrases.

To compile the list of repeated phrases, we start by looking for phrases of 3 or more words that appear in multiple different reviews for the same product. We also have a formula to make sure the phrase is somewhat substantial. For example, the three-word phrase “it was the” is not substantial, while “surpassed all expectancies” would be considered substantial. Our formula takes into account the phrase length, complexity and type of words being used to make sure that each phrase on the list is more than just a string of prepositions, indefinite articles and pronouns commonly used in everyday English.
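The phrase-gathering step above can be sketched roughly as follows. This is a minimal illustration, not ReviewMeta's actual formula, which is not published here: the `STOPWORDS` list, the `substantiality` scoring rule, the `min_score` cutoff and all function names are assumptions made up for the example.

```python
import re
from collections import defaultdict

# Assumed list of "filler" words; the real word categories are not published.
STOPWORDS = {"a", "an", "the", "it", "was", "is", "i", "this", "that", "of",
             "to", "in", "on", "for", "and", "with", "my", "we", "you", "all"}

def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())

def ngrams(tokens, n):
    """Return every run of n consecutive tokens as a tuple."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def substantiality(phrase):
    """Hypothetical score: share of non-filler words times their average length."""
    content = [w for w in phrase if w not in STOPWORDS]
    if not content:
        return 0.0
    avg_len = sum(len(w) for w in content) / len(content)
    return (len(content) / len(phrase)) * avg_len

def repeated_phrases(reviews, sizes=(3, 4, 5), min_reviews=2, min_score=4.0):
    """Map each substantial phrase to the indices of the reviews that contain it."""
    seen_in = defaultdict(set)
    for idx, text in enumerate(reviews):
        tokens = tokenize(text)
        for n in sizes:
            # set() so a phrase counts once per review, however often it appears
            for gram in set(ngrams(tokens, n)):
                seen_in[gram].add(idx)
    return {
        " ".join(gram): sorted(ids)
        for gram, ids in seen_in.items()
        if len(ids) >= min_reviews and substantiality(gram) >= min_score
    }

# Made-up sample reviews for illustration only.
reviews = [
    "It was the best. It surpassed all expectancies for me.",
    "Honestly it was the thing that surpassed all expectancies.",
    "Okay product.",
]
result = repeated_phrases(reviews)
```

With these sample reviews, "it was the" appears in two reviews but scores zero because every word is on the filler list, so only "surpassed all expectancies" survives.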

Once we have our list of repeated phrases, we check each review to see if (and how often) it uses these phrases. We assign each review a score, taking into account factors like word count, the number of repeated phrases found and the substantiality of those phrases. A low score indicates that few or no repetitive phrases are used in that review. Reviews that surpass a certain threshold are flagged as using repetitive phrases.
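As a rough sketch of the scoring step, the snippet below divides the summed weight of the matched phrases by the review's word count. The formula, the `threshold` value and the names `review_score` and `flag_reviews` are all hypothetical; the text only says that word count, phrase count and substantiality are factors.

```python
import re

def review_score(text, phrase_weights):
    """Hypothetical score: summed weight of matched phrases per word of review."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    # Pad with spaces so phrases only match on whole-word boundaries.
    padded = " " + " ".join(words) + " "
    total = 0.0
    for phrase, weight in phrase_weights.items():
        # str.count counts non-overlapping occurrences of the phrase.
        total += padded.count(" " + phrase + " ") * weight
    return total / len(words)

def flag_reviews(reviews, phrase_weights, threshold=0.5):
    """Return indices of reviews whose score exceeds the (assumed) threshold."""
    return [i for i, text in enumerate(reviews)
            if review_score(text, phrase_weights) > threshold]

# Made-up phrase weight and reviews for illustration only.
phrase_weights = {"surpassed all expectancies": 7.0}
reviews = [
    "Surpassed all expectancies!",
    "It surpassed all expectancies " + "and then some more words " * 6,
    "Okay product.",
]
flagged = flag_reviews(reviews, phrase_weights)
```

Dividing by word count means a one-sentence review built around a repeated phrase scores much higher than a long review that happens to include the same phrase once.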

If a high number of reviews use repetitive phrases, it can be an indication that the reviews were not created naturally. However, there are still plenty of valid reasons we’d see repeated phrases that don’t necessarily mean the reviews are biased. For example, you may see multiple reviewers mention product features that any thorough review would need to cover. However, if several reviewers are regurgitating the same marketing language word for word, it might be a sign that these reviews were from hired guns.

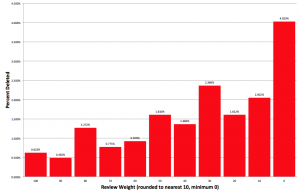

In order to determine if these reviews are malicious or benign, we group all reviews with repetitive phrases and check what percentage of the total they represent. While it’s not immediately problematic to see a small percentage of reviews with repetitive phrases, an excessive percentage can trigger a warning or failure. Next, we check to see if reviews with repetitive phrases have a higher average rating than reviews without repetitive phrases. If they do, we’ll check to see if this discrepancy is statistically significant. This means that we run the data through an equation that takes into account the total number of reviews along with the variance of the individual ratings and tells us if the discrepancy is more than just the result of random chance. (You can read more about our statistical significance tests here.) If the reviews with repetitive phrases have a significantly higher rating than reviews without repetitive phrases, it strongly suggests that the reviews with repetitive phrases are not benign and are unfairly inflating the overall product rating.
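The comparison described above might look something like the sketch below. The article does not say which significance test is used, so this example swaps in a Welch-style statistic with a normal approximation, which uses the same inputs (group sizes and rating variances). The function name `compare_groups`, the `alpha` level and the sample ratings are assumptions for illustration.

```python
from math import sqrt
from statistics import NormalDist, mean, variance

def compare_groups(flagged, unflagged, alpha=0.05):
    """Compare ratings of flagged vs. unflagged reviews.

    Assumed method: Welch-style z statistic with a one-sided normal
    approximation. Each group needs at least two ratings.
    """
    share = len(flagged) / (len(flagged) + len(unflagged))
    diff = mean(flagged) - mean(unflagged)
    # Standard error of the difference, built from each group's variance and size.
    se = sqrt(variance(flagged) / len(flagged)
              + variance(unflagged) / len(unflagged))
    if se == 0:
        # No spread in either group: any positive gap is not due to chance.
        p_value = 0.0 if diff > 0 else 1.0
    else:
        # One-sided: is the flagged group's average *higher*?
        p_value = 1.0 - NormalDist().cdf(diff / se)
    return {
        "share_flagged": share,
        "rating_gap": diff,
        "p_value": p_value,
        "significant": p_value < alpha,
    }

# Made-up star ratings for illustration only.
flagged = [5, 5, 5, 5, 4, 5, 5, 5, 5, 5]
unflagged = [3, 4, 2, 5, 3, 4, 3, 2, 4, 3, 5, 3]
report = compare_groups(flagged, unflagged)
```

The normal approximation is only reasonable for larger groups; with a handful of reviews, a proper t distribution would be the safer choice.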

Regarding your Rating Trend data: in the warning flag for a product I looked up, the average high volume day rating was much lower than the normal volume day rating. I’m not sure what’s going on here, since in your description you say you “check to see if reviews created on high volume days have a higher average rating than reviews created on normal-volume days.”

Of the 4 days that were flagged as high volume days, all had 3 reviews each, but only one of the 4 days had a daily average rating higher than the normal day average. The difference between high volume average and the normal day average was definitely large enough to be statistically significant, but only when considering lower than average reviews. Here is the page link if you need to look into it further: https://reviewmeta.com/amazon/B00H416L6U

It looks like in this case, there is only a “warn”, not a fail for this test. There are only 12 reviews out of 239, and there is no statistically significant difference in the ratings.

It seems like most products that I have looked at on here have “Customer Service” as a repetitive phrase. Is this really considered a substantial phrase with any products?

Hi Kathy-

The phrase “Customer Service” isn’t always going to be flagged as repetitive. It depends on how many reviewers are mentioning it for that product along with the other words that are used in that review. If the review is only a sentence, it’s more likely to get flagged for having substantial repeated phrases.

Also, in my experience, when I see a lot of reviews mention “customer service”, it’s an immediate red flag in my book.

It would be great if the product names weren’t counted as substantial phrases, but I don’t know if that’s really possible.

Yes and no. To some extent, using the product name word for word in the review might be a real red flag. What if all reviews said “I loved this SureBrand® ExtraCare™ Pillow Case Lite© and enjoy it every day”. Using the exact product name over and over like that could definitely be a red flag.

However, sometimes it could be completely benign. That judgement call is a bit out of my ability to write computer code for, and that’s why I have all the info displayed to let everyone read it and make the call themselves.

Keep in mind there’s a bit more to the test than just looking for the repeated phrases – it checks to see if reviews with those phrases are rated higher on average than reviews without the phrases. If the two groups give the product roughly the same rating, then it’s not quite as large a flag.

I agree but I think it shouldn’t be too hard to separate the two? In a check I made for an Argan Oil, 2 of the phrases flagged were “using argan oil” and “great argan oil”.

I imagine this would be a common recurring problem, as I would think a majority of people would want to refer to the product article when writing a review. If a majority of product articles are also made up of 2 or more words, finding 2 reviews which both use the same complex word in conjunction with the product article would be quite likely, unless the complex word is particularly uncommon.

I’m not 100% sure if this would work but perhaps using a dictionary lookup table for nouns? Maybe I’m making too many assumptions, but aren’t all products generally a thing, surrounded by a description that further identifies the product – branding (unlisted proper nouns) or adjectives. idk perhaps a rule that listed nouns found in the title are ignored from the review as complex words, or something like that. If not ignored, maybe downgrade their statistical significance so it requires more repetitions of the phrase to be flagged.

Another way might be to strip out the listed nouns and then run a check for Wikipedia URLs containing each noun and its preceding word. Words like Argan might not be listed as nouns but Oil is, and this would be reflected by an entry for Argan Oil on Wikipedia. SureBrand might have a wiki entry of its own, but it’s unlikely SureBrand Pillow Case would unless the term was popularly synonymous with the thing or it was generally considered important by many people – like Sony Walkman, for example.

It works fine in my head until I see all the times it doesn’t, but...

No simple solution and I greatly appreciate and admire the work you’ve done here and especially the way it’s presented (gimme all those lovely details). It’s all very fair and open to further exploration under the hood, something Fakespot doesn’t do. P.S. this isn’t a fake review ;p